Introduction

In an era of rapid technological change, Artificial Intelligence (AI) has emerged as a powerful tool in many sectors, including content moderation. However, growing reliance on AI content moderation tools has ignited a fierce debate about their effectiveness and impartiality, particularly with respect to political bias. Critics argue that these tools, designed to filter out harmful content, often reflect the biases of their creators, raising concerns about free speech and the integrity of the information users receive.

The Rise of AI Content Moderation

The use of AI in content moderation has seen significant growth over the past decade. With the explosion of user-generated content on platforms such as social media, blogs, and forums, the demand for efficient moderation solutions has skyrocketed. AI tools, leveraging machine learning and natural language processing, aim to automate the identification and removal of inappropriate content, including hate speech, misinformation, and graphic imagery.

Historical Context

The concept of content moderation is not new. Traditionally, human moderators reviewed and filtered online content, but this approach became difficult to sustain as platforms scaled. AI tools promised a more efficient, scalable, and cost-effective solution. Yet as these tools became more prevalent, reports of political bias began to surface, drawing attention to the potential pitfalls of AI moderation.

Understanding Political Bias in AI

Political bias in AI content moderation tools can arise in several ways. It may stem from the datasets used to train AI systems, which can reflect existing societal biases, or from the judgments of the human annotators who label that data. For example, if an AI tool is trained predominantly on content from one political ideology, it may unintentionally flag or remove content that represents opposing viewpoints.
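One common way to check for this kind of skew is to audit a sample of moderation decisions and compare how often posts from different groups are flagged. The sketch below is a minimal illustration, assuming each audited post has already been tagged with a hypothetical viewpoint label; the function names and the toy sample are invented for illustration and do not come from any real platform or library.

```python
from collections import defaultdict


def flag_rates(decisions):
    """Return the fraction of posts flagged for each group.

    `decisions` is an iterable of (group, flagged) pairs, where `group` is a
    hypothetical viewpoint label assigned during an audit and `flagged` is
    True if the moderation model flagged the post.
    """
    totals = defaultdict(int)
    flagged = defaultdict(int)
    for group, was_flagged in decisions:
        totals[group] += 1
        if was_flagged:
            flagged[group] += 1
    return {g: flagged[g] / totals[g] for g in totals}


def max_rate_gap(rates):
    """Largest difference in flag rate between any two groups (0.0 means parity)."""
    return max(rates.values()) - min(rates.values())


# Toy audit sample, invented for illustration (not real moderation data).
sample = [
    ("viewpoint_a", True), ("viewpoint_a", False),
    ("viewpoint_a", False), ("viewpoint_a", False),
    ("viewpoint_b", True), ("viewpoint_b", True),
    ("viewpoint_b", False), ("viewpoint_b", False),
]
rates = flag_rates(sample)
print(rates)                # {'viewpoint_a': 0.25, 'viewpoint_b': 0.5}
print(max_rate_gap(rates))  # 0.25
```

A gap on its own does not prove bias: if one group's sample genuinely contains more rule-violating posts, its flag rate should be higher. A fuller audit would also compare error rates (for example, how often each group's posts are flagged wrongly) against human-reviewed labels.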

Examples of Allegations

- Social Media Platforms: Several major social media platforms have faced allegations of bias, with users claiming that their politically conservative posts have been disproportionately flagged as inappropriate.

- News Aggregators: Automated systems used by news aggregators have been criticized for promoting content that aligns with certain political ideologies while suppressing other perspectives.

- Community Forums: Users of online forums that employ AI moderation tools have reported that politically charged discussions were restricted more heavily than discussions of neutral or less controversial topics.

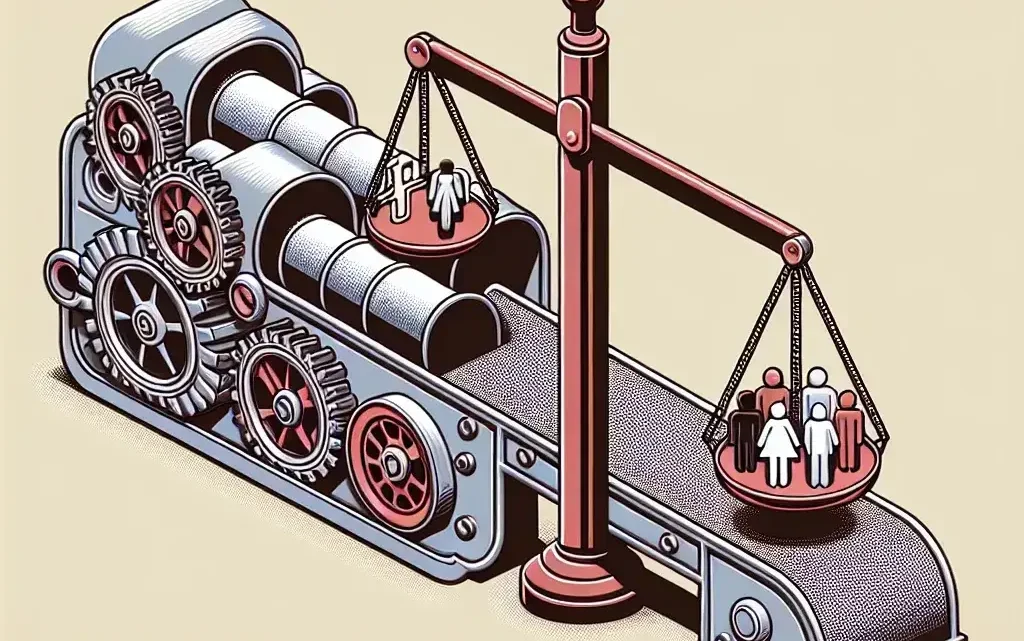

The Implications of Bias

The implications of political bias in AI content moderation tools are profound. Such bias threatens the principle of free speech and can also contribute to the polarization of public discourse. Users who perceive a platform as biased may feel alienated from it, leading to a decline in trust and engagement.

Free Speech Concerns

Free speech advocates argue that AI moderation tools can inadvertently become instruments of censorship. When these tools disproportionately flag or remove content based on political leanings, they create an environment where certain voices are amplified while others are silenced. This imbalance can hinder open dialogue and the exchange of diverse ideas.

Polarization of Public Discourse

As political views become increasingly polarized, the role of AI in shaping public discourse cannot be overstated. Platforms that employ AI moderation may inadvertently contribute to echo chambers, where users are exposed only to viewpoints that reinforce their existing beliefs. This can exacerbate societal divisions and hinder constructive conversation.

Pros and Cons of AI Content Moderation

Pros

- Efficiency: AI can process vast amounts of content quickly, identifying harmful material far more rapidly than human moderators.

- Consistency: AI tools apply the same criteria across all content, reducing the variability that comes from individual moderators' judgment, fatigue, or error, though the criteria themselves can still encode bias.

- Cost-Effectiveness: Reducing the reliance on human moderators can save platforms substantial operational costs.

Cons

- Bias: As noted, AI systems can perpetuate existing biases, leading to unfair treatment of certain viewpoints.

- Lack of Nuance: AI may struggle to understand context, leading to misinterpretations and inappropriate content removal.

- Transparency Issues: The algorithms and decision-making processes behind AI moderation tools are often opaque, making it difficult for users to understand why their content was flagged.

Future Predictions for AI Moderation Tools

The future of AI content moderation tools will likely hinge on advancements in technology and a growing awareness of the importance of ethical AI practices. As these tools continue to evolve, several predictions can be made:

Greater Accountability

As the backlash against perceived biases grows, companies will likely face increasing pressure to ensure their AI tools are fair and transparent. This may lead to the establishment of industry standards and guidelines governing the development and deployment of AI moderation technologies.

Improved Training Datasets

To mitigate bias, the focus will shift toward creating diverse and representative datasets for training AI systems. By including a broader range of perspectives, developers can build more balanced tools that better reflect societal diversity.
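As a simple illustration of what "more representative" can mean in practice, the sketch below downsamples a labeled dataset so that every group contributes the same number of training examples. It assumes each example carries a hypothetical group tag (here a `view` field); the helper name is invented for illustration. Downsampling is only one option: it discards data, and reweighting examples or collecting more content from underrepresented groups are common alternatives.

```python
import random
from collections import defaultdict


def balance_by_group(examples, key, seed=0):
    """Downsample so every group contributes the same number of examples.

    `examples` is a list of records and `key` extracts a group label
    (for example, a hypothetical viewpoint tag) from each record.
    """
    buckets = defaultdict(list)
    for ex in examples:
        buckets[key(ex)].append(ex)
    # The smallest group sets the per-group quota.
    smallest = min(len(bucket) for bucket in buckets.values())
    rng = random.Random(seed)  # fixed seed so the sample is reproducible
    balanced = []
    for group in sorted(buckets):
        balanced.extend(rng.sample(buckets[group], smallest))
    return balanced


# Toy data: six posts tagged "a" and two tagged "b" (invented for illustration).
data = [{"text": f"post {i}", "view": "a"} for i in range(6)] + [
    {"text": f"post {i}", "view": "b"} for i in range(6, 8)
]
out = balance_by_group(data, key=lambda ex: ex["view"])
print(len(out))  # 4
```

Balancing group counts addresses only one source of skew; if the labels themselves were assigned unevenly, a balanced dataset can still teach a biased model.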

Hybrid Approaches

Future moderation solutions may adopt hybrid approaches, combining AI efficiency with human insight. This could involve using AI to flag potentially harmful content while leaving final decisions to trained human moderators, thereby ensuring a balance between speed and nuance.
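A hybrid pipeline of this kind can be sketched as a triage step: the model scores each post, posts below a threshold pass through, and everything at or above it goes into a queue for human moderators, highest score first. This is a minimal sketch under stated assumptions: the `score` is a hypothetical model's estimated probability that a post is harmful, and the 0.5 threshold is an arbitrary illustrative value, not a recommendation.

```python
import heapq


def triage(posts, flag_threshold=0.5):
    """Split posts into auto-allowed posts and a human review queue.

    `posts` is a list of (post_id, score) pairs, where `score` is a
    hypothetical model's estimated probability that the post is harmful.
    The model never removes anything itself: posts at or above the
    threshold are queued for a human moderator, highest score first.
    """
    allowed = []
    queue = []  # min-heap keyed on negative score, so the highest score pops first
    for post_id, score in posts:
        if score >= flag_threshold:
            heapq.heappush(queue, (-score, post_id))
        else:
            allowed.append(post_id)
    review_order = [heapq.heappop(queue)[1] for _ in range(len(queue))]
    return allowed, review_order


allowed, review = triage([("p1", 0.2), ("p2", 0.9), ("p3", 0.6)])
print(allowed)  # ['p1']
print(review)   # ['p2', 'p3']
```

The threshold is where the speed/nuance trade-off lives: lowering it sends more borderline posts to humans (more context-sensitive decisions, higher cost), while raising it lets more content through without review.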

Cultural Relevance of AI Moderation

AI moderation tools must also contend with cultural context. In a globalized world, they face the challenge of understanding different cultural contexts, nuances, and sensitivities, and a lack of cultural awareness can lead to the misinterpretation of content, further complicating the issue of bias.

Expert Opinions on AI Bias

Experts in the field have shared varying opinions on the challenges and implications of AI content moderation. Dr. Jane Doe, an AI ethics researcher, states, “The potential for bias in AI moderation tools is a significant concern. We must prioritize transparency and inclusivity in the development process to mitigate these risks.” Meanwhile, tech entrepreneur John Smith emphasizes the need for a balanced approach: “AI can play a crucial role in content moderation, but it is essential to have checks and balances in place to ensure fair treatment of all viewpoints.”

Conclusion

AI content moderation tools have transformed the landscape of online content management, offering efficiency and scalability. However, the criticisms surrounding political bias highlight the complexities and ethical dilemmas inherent in deploying such technologies. As we move forward, it is essential to address these concerns proactively, ensuring that the tools we develop promote fairness, inclusivity, and open dialogue. The future of AI moderation will depend on our ability to build systems that not only protect users but also uphold the values of free speech and diverse perspectives.